How a Search Engine Works

Written by: Ryan Jones

Get Started with SERPrecon

Get Started for FreeHow A Search Engine Works

I just got done speaking at a few SEO conferences, and one thing shocked me is that many SEO's mental model of how a search engine works is vastly outdated. Search engines work very differently than how they did just a decade ago, and the SEO industry is still using outdated models and metrics and checklists. In this post we will break down what we learned from the Yandex code leak, the Google API leak, the Google testimony, and our own understanding of information retrieval patents, textbooks, and research. I'm going to try to break it all down in simple english though, so no computer science degree needed.

Components of Search:

For our mental model we will simplify search down into 3 main components:

Crawling

Indexing

Retrieval & Ranking

I grouped retrieval and ranking as one step here because that's how they happen in the search engine code. Retrieval happens before ranking, but they kind of happen at the same time and there can be multiple stages of retrieval - we'll dive into that. We're not going to spend a ton of time on Crawling.

Crawling

Search engines don't really "Crawl" a website in the way that SEOs talk about it. Unlike your actual browser or a tool like screaming frog that follow links, a search engine crawler works from a prioritized list of URLs to fetch. Google crawls using a headless version of Chrome. When it fetches a URL it always comes directly (no referer string, cookie, session, or other state set.). When it discovers new links on the page it doesn't immediately crawl or fetch them - instead it sends them back to the queue to be prioritized. The crawler also sends crawl data back to the indexer for processing.

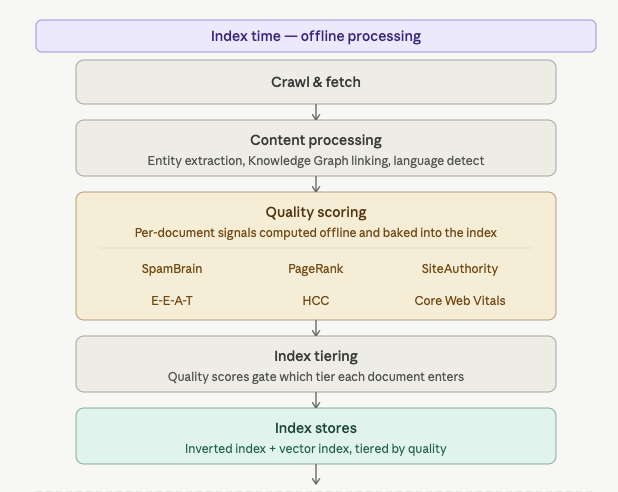

Indexing

Some of these things likely happen in the crawler, but we're making a mental model so I've included them here because it's easier to imagine. The first thing that happens after the crawl is content parsing (this likely happens in the crawl itself) as well as language and location detection, entity detection, knowledge graph linking, and duplicate / near-duplicate / canonical type things.

The indexing layer is also responsible for calculating document based signals that don't rely on the query. Many of these are called quality signals that map into what Google calls Q* - their quality score. Quality signals calculated at indexing time include:

Pagerank and other link based signals

Spam signals like Spambrain - a ML spam classifier. This could exlude you from being indexed and put you into the "crawled, not indexed" category.

Helpful content classifiers.

Core web vitals (Technically done at crawl time and passed to the indexer)

Term extraction.

Various other quality signals (SiteAuthority, metrics related to what we call EEAT, etc)

The index has tiers.

This is kind of an important point. There isn't just one index. There's multiple tiers of the index. In the old days, what tier you went in was determined by your pagerank. That may not be the case anymore. We learned from testimony that the various tiers of the index are based on where the site is stored. We can think of them in terms of memory and hard drive space as a good mental model: Tier1 would be like your RAM. Tier 2 would be solid state drive, and Tier 3 is a slower storage model. These tiers can be based on PageRank and quality scores like the old days, but they're more than likely based on clicks and user data and how often a site is searched for or served up in search. Remember the annoying "crawled not indexed" issue in search console? That's this stage deciding your page doesn't even belong in tier 3. It's important to realize this decision is made even before a user types a query. If you aren't indexed, you can't be returned for any query.

There's also multiple indexes

Search engines maintain multiple types of indexes and both of them are tiered like above. The index we all know about is the traditional inverted index. That's the one that works like the card catalog in the library (you remember libraries right?) Essentially this index is a list of terms and documents that include those terms. They also store some data here - like BM25 - a measure of term frequency that has become the standard in information retrieval. Think of this like a much better version of keyword density with diminishing returns, TF-IDF built in, and document length normalization. It's (relatively) quick and easy to compute. They may further break indexing down into passages, however we're going to keep this mental model higher level.

There's also the vector index. Vectors are embeddings we've all heard so much about. Vectors allow all of the cool tech like neural matching and rankbrain and every other new algorithm to function. Vectors are just a mathematical representation of queries or documents or passages in multi-dimensional space. They allow a search engine to use cosine similarity to find other documents, queries, passages, etc that are similar in meaning by finding ones that are nearby in space.

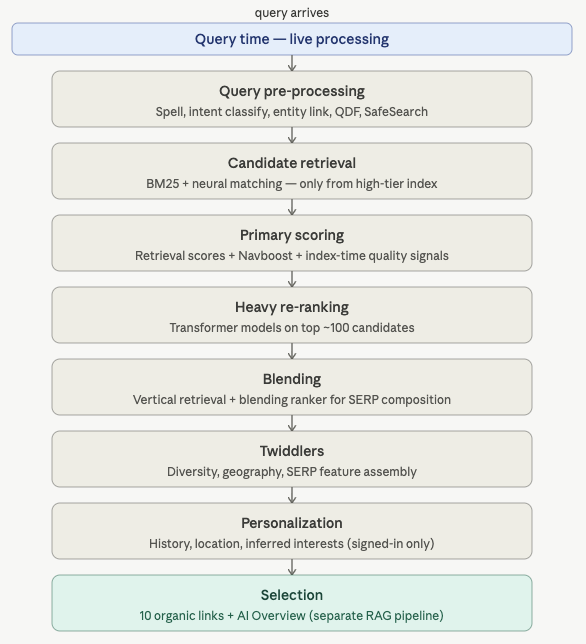

So far, our model kind of looks like this. (Thanks Claude for turning the above paragraphs into an image)

Retrieval and Ranking

This step is where we to spend the most time. I think most SEOs had a solid grasp on crawling and understood most of indexing. Retrieval and Ranking is where all the stuff that's related to the query happens. Let's break down what a search engine does once it gets your query:

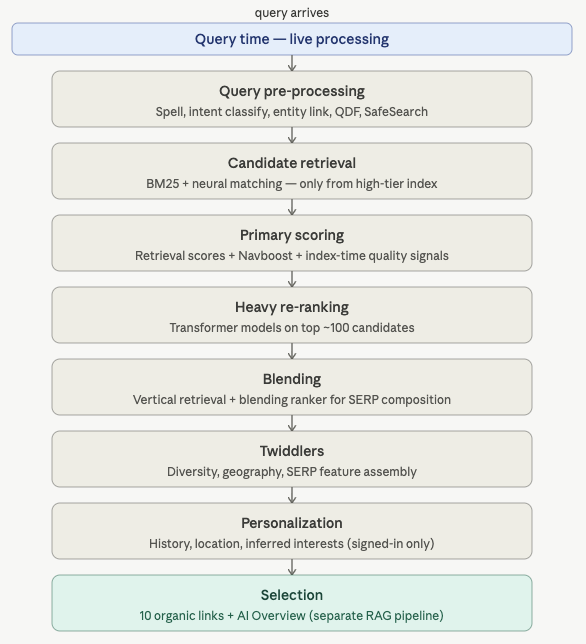

Retrieval and ranking kind of looks like this. Thanks again Claude:

Query Pre-Processing.

Before it even goes to the index a search engine needs to do some stuff with your query. This step includes the following:

Spellcheck.

Intent classification (navigational, informational, transactional, commercial)

Named entity recognition (for knowlege graph lookup) - are you looking for a "thing" or a list of documents?

Query expansion (synonym injection)

QDF - query deserves freshness - can determine how the engine handles retrieval.

SafeSearch classification.

Location detection

Candidate Retrieval

The first step in retrieval is to get a list of candidate sites. Remember our inverted index? The search engine will get a list of all the documents that contain all (and sometime some) of the terms - ordered by BM25. If there are enough documents in the first index tier it moves on to the second step. If not, it will go to tier 2, and then tier 3 until there are enough documents with sufficiently high BM25 scores.

It will then go and get another list of documents from the vector index using Cosine Similarity. This step includes what you might have heard called neural matching, or rank embed, etc. They're getting another set of candidates from the vector index using the same tier logic.

Both of these steps can happen at the same time. The results are then combined into a de-duplicated list with all of the relevant scores.

Initial Scoring (FastRank)

Now that we have our candidate list, it's time to score them. Scoring combines the BM25 and Cosine SImilarity signals with the index time quality signals (pagerank, authority, spam, helpful content, etc) as well as click data from things like Navboost to produce a ranked list of hundreds or sometimes thousands of documents.

Google calls this fastrank becaues it happens in linear time. FastRank is also what's used for LLMs like Gemini. Since AI needs to be quick and really only cares about finding text to support the AI answer, it typically stops here and doesn't apply the other signals. Web search however continues on..

Re-Ranking

Re-ranking is where all the heavy time consuming stuff is done. It happens after retrieval and initial scoring cull the list because mostly everything in re-ranking is done with machine learning - and that isn't fast. This includes things like BERT and RankEmbed and other computationally complex algorithms. We won't go into much detail on how they work aside from any sub system or algorithm that requires looking deeper at the text of the page in context of the query would happen in the re-ranking stage.

Blending & Twiddling

Finally we get to everybody's favorite word: Twiddlers! This is the stage where separate retrieval pipelines are combined. Things like News, Images, Shopping, Video, Maps. The blender (I think Google calls this Glue) scores all of these other verticals to determine if, and where they belong in the search result.

Twiddlers include things like manual actions, domain diversity, DMCA issues, breaking news boosts, official website boosts, etc.

Where does SERPrecon fit in?

SERPrecon focuses on the initial retrieval and relevance metrics that feed into initial search ranking. We measure your BM25, Cosine similarity and intent against everybody else who ranks. We also extract entities and keywords (using BERT - the same as Google) from your competitors and tell you how to improve your relevance scores.

Too many SEO tools focus on outdated metrics or things that don't impact semantic relevance and selection (like pagerank, or page speed.) SERPrecon only uses real metrics used by real search engines - none of the made up stuff like keyword density or domain authority.

SERPrecon focuses on making sure you're relevant enough to be retrieved - so that you can be ranked. Relevance & retrieval is the first step of SEO and GEO - ensuring your relevance optimizations set you up for success no matter where your customers are searching.